Arcanum Ventures

Arcanum Ventures is a venture capital investment firm, blockchain advisory service, and digital asset educator. We bring precise knowledge and top-tier expertise in advising blockchain startups.

Arcanum demystifies the blockchain space for its partners by providing intelligent, poised, crystal clear, and authentic input powered by our passion to empower and champion our allies.

We unravel the mysteries and unlock the opportunities in blockchain, Web3, and other emerging innovations.

“Is This Real?” How Data Authentication Might Save The Internet

Seeing Is No Longer Believing

Some weeks ago, people on social media were debating whether a video of Benjamin Netanyahu was real.

The speculation exploded after a livestream clip led viewers to claim he appeared with six fingers, a visual glitch often associated with AI-generated imagery. Since then, a follow-up “proof-of-life” video was posted, but that only made things worse. It gave the internet a fresh round to dissect and doubt the authenticity of the footage.

This perfectly sums up the reality of the AI era.

The technology is advancing so rapidly that fake content can look completely real, while real content can be dismissed as fake.

The video above shows just how easy it has become to assume a completely convincing digital identity. People can shift seamlessly between real and fictional personas – influencers, public figures, world leaders, and also entirely fabricated characters (where the training data for these personas comes from is a separate issue entirely). The glitches that once gave AI away are now getting fixed. These digital twins can now move naturally through physical space. Fabric looks more realistic, and subjects can interact with objects in ways that appear to follow real-world physics. On top of that, voice generation trained on real-world samples is becoming increasingly realistic, making it even easier to fabricate someone’s identity.

If this isn’t terrifying, we don’t know what is.

Misinformation, Disinformation, and the Collapse of Trust

Remember when the internet “only” had a credibility problem? In 2026, those days are missed. Back then, most content still came from real human beings, and as a consumer, you needed to decide whether the source was trustworthy. What we are dealing with today is much worse: an authenticity problem, one where you have to ask yourself, “Is this even real?”

The authenticity crisis of the modern internet is accelerated by the following mechanisms:

Misinformation is false information spread without intent to deceive. Disinformation is false information spread deliberately. As platforms optimize for engagement, false content of both kinds ends up flooding the system, making it harder to verify reality.

In a widely cited 2018 Science study, Soroush Vosoughi et al. found that false news spread faster and more broadly on Twitter than true news, with falsehoods being 70% more likely to be retweeted.[1]

With generative AI becoming more widely used, that problem is getting dramatically worse. It is now trivially easy to generate content that looks convincing enough to survive a few seconds of scrutiny, which is all social media really asks of anything.

The Rise of AI Slop

Although generative AI has plenty of legitimate uses, it has also become the perfect machine for producing “slop”. AI slop is a term for low-cost, high-volume AI-generated content designed to farm attention with minimal effort.

In some cases it is harmless, but in other cases it can take the shape of propaganda, scam bait, impersonation, or political manipulation sold as entertainment, all of which can fuel mis- and disinformation. To make matters worse, there are no restrictions in place for AI modification of existing content. Even major platforms are now acknowledging the backlash, and newer or niche networks are explicitly marketing themselves as refuges from AI slop rather than competing on better content ranking.

However, most platforms have practically no incentive (yet) to fight back against synthetic content.

The Internet Stopped Feeling Real

Reuters Institute’s 2025 Digital News Report found that traditional news media are struggling to maintain engagement and trust, while social and video networks continue to gain influence. In the United States, for the first time, more people reported getting news from social and video platforms than from TV or websites.

The problem is that social media is not exactly built to maintain accuracy. The main goal of most social media platforms is to maximize retention, and to do that, any content that gains maximum engagement is rewarded by the algorithm. Unsurprisingly, posts that provoke an instant emotional reaction (think of ragebait or confusing posts) tend to outperform content that was created more carefully.

Platforms make money from engagement (the number of users, impressions, watch time, and so on) and from the perception of continued growth. AI allows content to be generated rapidly, which means these tools significantly boost engagement. As long as these numbers look good, platforms are simply not incentivized to crack down on false or synthetic content. As a result, there are very few meaningful guardrails in place to verify what is being shared, or whether the underlying content has been altered in some form.

Then there is the bot problem, which is where things get worse.

Nobody really knows how many bot or fake accounts exist on the largest platforms, and that is a serious issue. The companies themselves admit these figures are difficult to measure. Meta has repeatedly stated in SEC filings that duplicate and false accounts are hard to estimate at scale and may vary significantly from its internal estimates. Twitter, before the acquisition, said false or spam accounts represented fewer than 5% of monetizable daily active users, while also acknowledging the estimate relied on significant judgment and sampling.

In his attempt to buy Twitter in 2022, Elon Musk publicly argued that the platform had more bots than it disclosed, and used that claim as part of the broader pressure campaign around the deal. In March 2026, a California jury found that two of Musk’s May 2022 tweets questioning Twitter’s spam-account figures were materially false or misleading in a shareholder case over investor losses. That alone shows how economically consequential these metrics can be. Even without clear proof, uncertain bot estimates were treated as credible enough to influence a $44 billion deal.

The Mental Toll of Synthetic Feeds

Another thing worth mentioning here is the psychological damage of the overconsumption of mis- and disinformation.

Social media platforms are like slot machines. They are designed to keep consumers there as long as possible. Users are exposed to a relentless stream of high-energy content specifically tuned to hijack attention and trigger a response. It is not an accident that people feel mentally fragmented after spending too much time in these environments. The system is built that way.

As Anna Mikeda put it in our BOOM ROOM interview, AI slop functions “like junk food, but on a deeper level.” In her view, the danger is not just that this content is low-quality or repetitive, but that repeated exposure “rewires our dopamine pathways in a deeper way.”

This is especially concerning given how quickly generative AI is becoming embedded in everyday life. Deloitte’s 2026 Digital Consumer Trends report found that 61% of people in the Netherlands have already used GenAI. Deloitte also found that only 20% believe GenAI is unbiased.

AP reported in January 2026 that AI slop has become so widespread that platforms are now introducing controls to let users reduce the amount of AI-generated content in their feeds. TikTok alone said there were at least 1.3 billion clips on its platform labeled as AI-generated.

Data Authentication as Digital Self-Defense

So yes, there is clearly a problem here. If companies do not fully feel it (yet), users certainly do. The obvious question, then, is how we deal with the spread of false content.

One part of the answer is that we need a way to verify where the data in front of us actually came from – i.e. we need a method of authentication.

Data authentication refers to verifying the identity of data: where it came from and whether it is what it claims to be. In a digital environment, that data can be almost anything, such as a photograph, a video, a song, a voice recording, a piece of text, or even the digital profile responsible for generating and distributing it. As long as something exists as 0s and 1s, it can be authenticated.

The central objective of data authentication is to establish some form of identity behind the data. That identity should include the origin of the content, the context in which it was created, whether it has been altered, and the chain through which it has moved across digital systems. The authentication method should create a reliable way to connect digital content to its source and history.
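As a rough illustration of what such an identity could look like in practice, the sketch below fingerprints content with SHA-256 and attaches a small provenance record covering origin, context, and the version it was derived from. The `ProvenanceRecord` structure, its field names, and the `creator:alice` identifier are assumptions made for this example, not an existing standard.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import Optional


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 digest of the raw bytes as a hex string."""
    return hashlib.sha256(content).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    # Hypothetical record layout, for illustration only.
    content_hash: str            # fingerprint of the bytes being described
    origin: str                  # identifier of the creator or device
    context: str                 # note on how the content was created
    parent_hash: Optional[str]   # fingerprint of the version this derives from

    def record_id(self) -> str:
        """Hash the record itself so later records can reference it."""
        canonical = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(canonical).hexdigest()


original = b"raw photo bytes"
edited = b"raw photo bytes, cropped"

first = ProvenanceRecord(fingerprint(original), "creator:alice", "captured on phone", None)
second = ProvenanceRecord(
    fingerprint(edited), "creator:alice", "cropped in editor", first.content_hash
)

print(fingerprint(original) == first.content_hash)  # True: bytes match the record
print(fingerprint(edited) == first.content_hash)    # False: the edit changed the fingerprint
print(second.parent_hash == first.content_hash)     # True: the edit links back to its source
```

A fingerprint alone only shows that the bytes are unchanged. Who created them still has to be established by a signature or a trusted registry, which this sketch leaves out.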

The Search for a Fix

Any serious attempt to address the misinformation and disinformation problem has to begin with stronger authentication, and that comes with trade-offs. The part many people will not want to hear is that it likely means giving up some degree of privacy.

That is not ideal, but neither is the alternative. We are moving into a digital environment where trust can no longer be assumed. If trust is the goal, then some form of verifiable identity has to sit underneath the data.

That said, the success of any authentication system will depend on more than technical feasibility. It will depend on whether adoption is commercially realistic, and whether users have any real incentive to take the additional steps required for the system to work.

First, let’s look at how these solutions can be implemented at different layers.

Device Layer

One approach is device-layer authentication. In this model, data is authenticated when it is first captured, for example when a camera records an image or a microphone records audio and writes that data to storage.

In theory, this is a strong model, but it runs into a major limitation. Authentication often degrades the moment content enters post-production. The instant a file is edited, the original attestation becomes more complicated to preserve. This does narrow the effectiveness of this approach.
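A minimal sketch of this limitation, assuming the device holds a secret key: the capture is tagged at recording time, and any later edit makes verification fail. HMAC from the Python standard library stands in here for the asymmetric signature a real device would more plausibly use, and `DEVICE_KEY`, `attest`, and `verify` are hypothetical names.

```python
import hashlib
import hmac

# Hypothetical secret provisioned into the capture device. A real design would
# more likely keep an asymmetric private key in secure hardware.
DEVICE_KEY = b"example-device-key"


def attest(captured: bytes, key: bytes = DEVICE_KEY) -> str:
    """Produce a tag binding the captured bytes to the device key."""
    return hmac.new(key, captured, hashlib.sha256).hexdigest()


def verify(content: bytes, tag: str, key: bytes = DEVICE_KEY) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(attest(content, key), tag)


frame = b"sensor output at capture time"
tag = attest(frame)

print(verify(frame, tag))                     # True: untouched capture
print(verify(frame + b" color-graded", tag))  # False: any edit invalidates the tag
```

Preserving trust through post-production would require each editing step to add its own attestation on top of the original one, which is where the complexity comes from.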

There is also a business issue here. Hardware manufacturers are not always the natural owners of this problem. For a company making devices or hardware, the commercial incentive to build robust authentication infrastructure into the system may be limited unless demand or regulation makes it worth the effort.

Take a company like Sony. From a purely technical perspective, it could play a meaningful role in device-layer authentication. But from a commercial perspective, this is not obviously its problem to solve. Sony sells sensors and consumer electronics. Its incentives are tied to hardware performance or ecosystem loyalty, and not to cleaning up the epistemic collapse of social media.

Application Layer

So far, this seems to be the more viable path, at least in the near term. Application-layer authentication means solving the problem at the point of sharing rather than at the point of creation.

One way to implement this is through cryptographic authentication, potentially supported by blockchain-based infrastructure. This is attractive because cryptographic systems are well-suited to proving authorship and preserving a tamper-evident chain of custody.

This approach creates accountability inside the network where the content is distributed. The system does not need to prevent every false statement from existing. Instead, it would establish who introduced a piece of content, how it spread, whether anyone attempted to modify it, and which accounts were linked to that process.

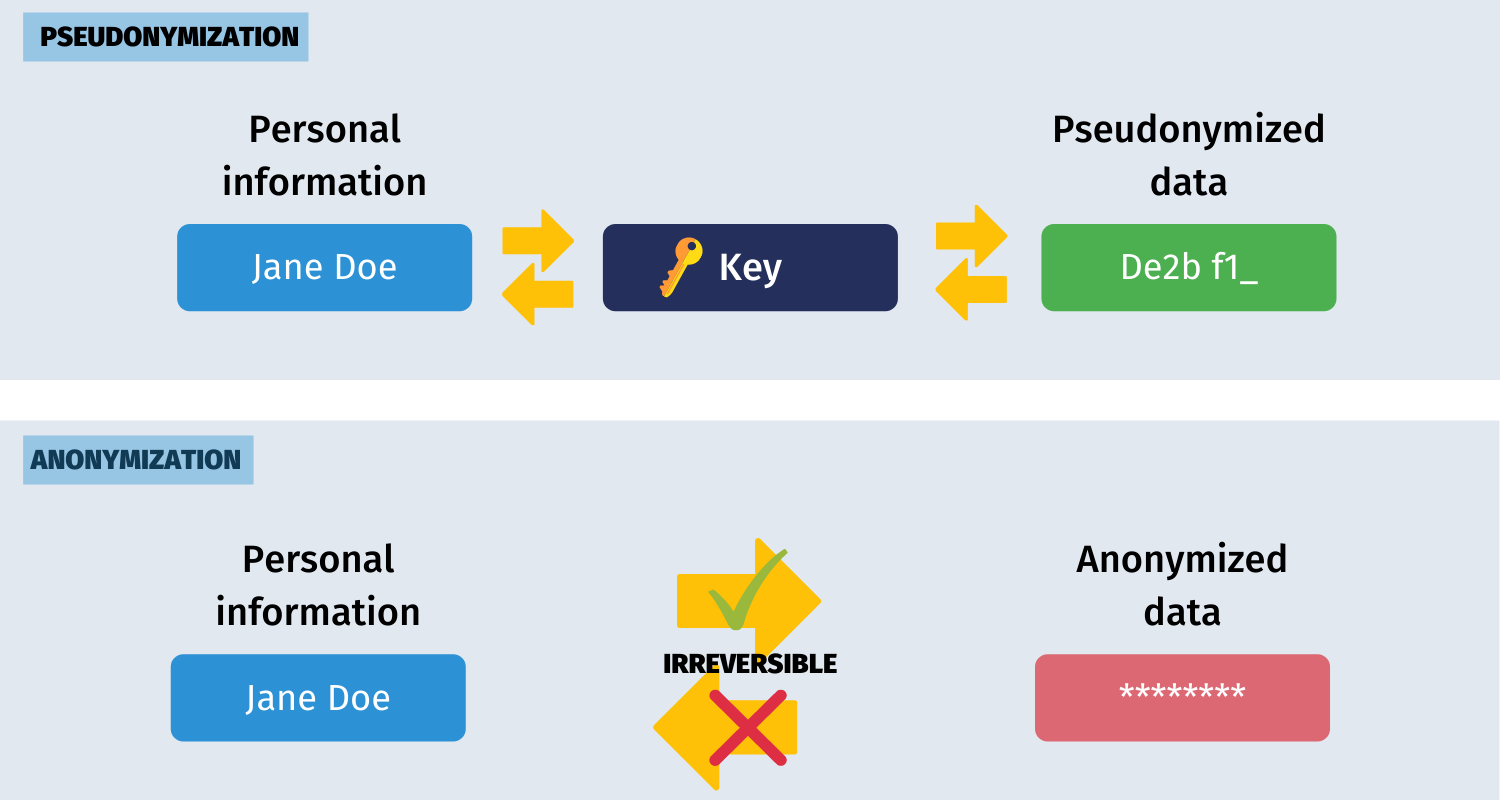
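The tamper-evident chain of custody described above can be sketched as a hash-linked event log, in which each entry commits to the one before it. This is a simplified, single-machine illustration using only the standard library. The entry fields and account names are assumptions, and a production system would add signatures and some form of replicated storage.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: Dict[str, str]) -> str:
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def append_event(log: List[Dict[str, str]], account: str, action: str,
                 content_hash: str) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    body = {
        "prev": log[-1]["hash"] if log else GENESIS,
        "account": account,
        "action": action,
        "content_hash": content_hash,
    }
    log.append({**body, "hash": _entry_hash(body)})


def chain_is_intact(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and check each entry points at its predecessor."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log: List[Dict[str, str]] = []
clip = hashlib.sha256(b"video bytes").hexdigest()
append_event(log, "acct:alice", "posted", clip)
append_event(log, "acct:bob", "reshared", clip)

print(chain_is_intact(log))         # True
log[0]["account"] = "acct:mallory"  # rewrite who introduced the content
print(chain_is_intact(log))         # False: the stored hash no longer matches
```

Because each hash depends on the previous one, changing any earlier entry invalidates everything after it unless the whole chain is recomputed, which is what anchoring the log in shared infrastructure is meant to prevent.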

This model also has a second advantage. It addresses the account problem alongside the content problem. If platform participation is tied to cryptographically authenticated identities, even under conditions of pseudonymity, then bot activity becomes much harder to scale. Accounts do not necessarily need full KYC. Realistically, mandatory KYC for every user would be commercially toxic and politically controversial. But pseudonymous verification (pseudonymization) offers a more workable middle ground. Users can retain a degree of privacy while still operating within a system that distinguishes persistent, accountable identities from disposable or automated noise.

That can open the door to a more gradual trust model. Exposure to non-KYC’d or weakly authenticated accounts can be limited. And importantly, distribution privileges can be tiered according to verification status. The repeated sharing of false or manipulated content can trigger restrictions, or even the loss of posting rights. While none of this eliminates bad behavior entirely, it would overhaul the framework within which social posting works.
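One way to picture tiered distribution is as a simple policy function that combines verification status with a strike count. Everything in the sketch below is hypothetical: the tier names, the reach caps, the halving penalty, and the three-strike limit are placeholders chosen for illustration, not values proposed in this article.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    UNVERIFIED = 0    # no persistent identity attached
    PSEUDONYMOUS = 1  # persistent cryptographic identity, no real-world name
    KYC = 2           # identity checked against real-world documents


# Hypothetical reach caps (share of the normal audience) and strike limit.
REACH_BY_TIER = {Tier.UNVERIFIED: 0.1, Tier.PSEUDONYMOUS: 0.6, Tier.KYC: 1.0}
MAX_STRIKES = 3


@dataclass
class Account:
    handle: str
    tier: Tier
    strikes: int = 0  # confirmed cases of sharing false or manipulated content


def reach_multiplier(account: Account) -> float:
    """Return the share of normal distribution this account's posts receive."""
    if account.strikes >= MAX_STRIKES:
        return 0.0  # posting rights suspended
    penalty = 0.5 ** account.strikes  # each strike halves reach
    return REACH_BY_TIER[account.tier] * penalty


print(reach_multiplier(Account("anon123", Tier.UNVERIFIED)))      # 0.1
print(reach_multiplier(Account("pseudo", Tier.PSEUDONYMOUS, 1)))  # 0.3
print(reach_multiplier(Account("repeat", Tier.KYC, 3)))           # 0.0
```

The design choice worth noting is that pseudonymous accounts keep most of their reach, so the policy rewards persistence and accountability without requiring full KYC.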

The Trade-Offs of a More Trustworthy Internet

Unsurprisingly, such a shift would come with a number of trade-offs. Stronger authentication adds friction to systems whose main appeal – and, arguably, one of their most addictive features – is the speed and ease with which users can participate.

What companies would see is that more verification means more infrastructure and potentially slower growth. It may also expose something they would rather hide, which is that some portion of a platform’s apparent activity may not survive real scrutiny. Cleaner systems can mean weaker-looking numbers in the short term, and that is not something every company wants to discover publicly.

However, this is a double-edged sword. If platforms continue moving in their current direction, further trust erosion is almost inevitable, and that will eventually become a revenue problem. Advertisers do not want to pay for fake reach or for environments saturated with synthetic content. In that sense, authentication can become a way to protect audience quality and the credibility of the platform. It may also force platforms to rediscover organic growth and be more transparent about their metrics.

The user side is more complicated. A more authenticated internet may be more trustworthy, but it can also become more invasive to personal data. Any system that ties identity more closely to participation raises concerns around privacy and surveillance. That is why the goal should not be total exposure of real-world identity, but finding a way to introduce enough accountability to make manipulation harder.

Does Authentication Have Product-Market Fit?

The question is whether any of this actually has product-market fit. In principle, the answer is yes. In practice, adoption will likely be driven by the pain points of companies. More specifically, it will be driven by financial pain or reputational pain.

Money, Trust, and Operational Pressure

The first major driver is money.

Platforms can tolerate a surprising amount of informational decay as long as engagement remains high and revenue continues to flow. But that tolerance has limits. The high number of bots, synthetic engagement, impersonation, fraud, and AI-generated slop will eventually begin to undermine the confidence of advertisers, who are a major revenue source for these sites.

The second driver is trust erosion.

For a while, platforms were able to survive in an environment where users broadly understood that some content was misleading and some accounts were fake. However, once enough users begin to feel that everything is potentially fake, the platform itself becomes harder to use. The social contract will slide into “assume nothing is real unless proven otherwise.” That is a terrible basis for a content ecosystem, and eventually it becomes a terrible basis for business as well.

The third driver is operational necessity.

The more content a platform hosts, the less viable it becomes to rely on moderation alone. Human review does not scale. Even AI-assisted moderation can only do so much if the underlying system has no reliable chain of custody attached to content or accounts. Authentication can change the underlying structure of the network so that moderation does not have to carry the entire burden.

The Most Likely Path to Product-Market Fit

Product-market fit is most likely to emerge where the pain is already concentrated. This involves environments where identity quality matters, where content provenance affects platform trust, and where synthetic activity creates measurable business risk. Social platforms are the clearest example. They already bear the reputational and commercial consequences of fake accounts and synthetic engagement. In that context, authentication is a response to an increasingly visible market failure.

So while hardware-level authentication may remain a valuable but niche infrastructure layer, application-layer authentication is where adoption is more likely to accelerate first.

The Winners of a Verifiable Internet

If this market develops the way we expect, it will reward companies that treat verifiability as infrastructure rather than as a moderation afterthought.

The most obvious winners would be new social platforms that launch with a clear focus on authentication and accountable participation from day one. If trust becomes a real point of differentiation, then platforms built around verifiability could position themselves as a direct counter to the current feed model.

There is also a strong opportunity here for existing companies building crypto wallet infrastructure. Wallets already function as persistent digital identifiers, and they are well-positioned to evolve into lightweight authentication rails for online accounts and content. Whether that means a wallet address serving as an immutable identity layer, or small economic costs attached to posting and distribution, the broader point is that these systems can make participation more legible and abuse more expensive.

The same applies to infrastructure developers working on wallets, decentralized identifiers (DIDs), and provenance tooling for social applications. If platforms decide they need stronger identity and chain-of-custody systems, they are unlikely to build all of that from scratch. Companies that can offer low-friction infrastructure for account verification and content traceability will be well-positioned in the future.

The market is also likely to reward developers who can solve the more difficult product problem of how to verify multimedia inside applications without making the user experience unbearable. That is probably where the most valuable infrastructure will sit.

The hardware side is more experimental, but still interesting. Any company that moves seriously into hardware-level data verification could end up doing more than selling devices. If authenticated capture becomes valuable enough, hardware companies may have an opportunity to evolve from manufacturing businesses into platform businesses, with control over both the device and the trust layer attached to the data it produces.

What Happens Next?

The internet has reached a fairly absurd point in its evolution, where seeing is no longer believing, and hearing is not far behind.

Data authentication offers a way to restore some structure to the old internet by linking content to a traceable source and preserving a record of how it moves through digital systems. The approach comes with trade-offs, especially around privacy and adoption, but the pressure to address the problem is likely to grow as trust continues to erode and platforms face clearer commercial consequences.

However, if proving what is real remains optional, then the internet will continue rewarding the exact thing it should be punishing, which is synthetic disinformation at scale.

Continue the Conversation

Whether you want to tune in, join us as a speaker on a podcast or event panel, or stay up to date with the latest in tech, Arcanum Ventures is here for you. We are passionate about exploring and discussing the most interesting developments shaping the space.

Arcanum Ventures also advises founders and teams building in complex, high-stakes environments, from privacy tech and Web3 to data infrastructure and token design.

If you are building something related to data verification and want a second set of experienced eyes, we want to work with you!

References

[1] S. Vosoughi, D. Roy and S. Aral, Science, 2018, 359, 1146–1151, DOI: 10.1126/science.aap9559
