Arcanum Ventures

Arcanum Ventures is a venture capital investment firm, blockchain advisory service, and digital asset educator. We bring precise knowledge and top-tier expertise in advising blockchain startups.

Arcanum demystifies the blockchain space for its partners by providing intelligent, poised, crystal clear, and authentic input powered by our passion to empower and champion our allies.

We unravel the mysteries and unlock the opportunities in blockchain, Web3, and other emerging innovations.

Why Robots Are Taking Over Industry Before They Master the Basics

“Go that way! You’ll be malfunctioning within a day, you nearsighted scrap pile.”

– C-3PO from Star Wars Episode IV: A New Hope –

This iconic line comes from an argument between C-3PO and R2-D2 after landing on Tatooine, when the two droids immediately disagree on which direction to take in the desert.

However, the line could just as easily come from a robotics engineer working on his latest prototype in 2026.

We are all familiar with those humanoid robot demos by now. Robots pick up objects and move them between tables, do a barrel roll, or show off some fighting skills. The demos look quite impressive and suggest that general-purpose robotics is no longer a question of if, but when.

However, real-world applications so far have been… less convincing.

Outside of curated environments, performance can degrade quickly. Small variations in lighting conditions, object placement, or surface texture can introduce enough uncertainty to disrupt execution. When a system encounters these variations, it may miscalculate or stop its task altogether. In other words, give a machine too much uncertainty and one wrong decision point, and it might be “malfunctioning within a day”.

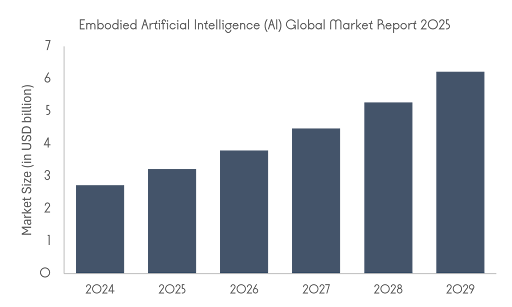

Robotics and embodied AI have certainly come a long way, though, and seem to be considered the next technical revolution. Despite the current technical challenges and limitations, governments around the world are committing billions to AI and robotics, with embodied systems increasingly treated as a strategic priority.

So why the enormous geopolitical and economic appetite for this technology?

Robotics is a Race to Remain Competitive

Governments are investing in robotics and embodied AI for the same reason they invest in energy or other novel technologies: competitive advantages.

In 2026, these systems are a part of critical infrastructure. Investment in robotics affects industrial productivity, adds to supply chain resilience, and also helps with labor shortages. The European Commission, for example, states that the EU is investing more than €1 billion per year in AI through Horizon Europe and Digital Europe, with the explicit goal of scaling strategic technologies across the economy.

There is also a labor and demographic reason. Countries with aging populations and manufacturing pressure are looking at robotics as a way to sustain output when human labor becomes harder to find. Japan’s Ministry of Economy, Trade and Industry has continued to position robotics in areas such as long-term care and responses to regional labor shortages. In these cases, robotics is becoming an economic necessity, especially in sectors where labor constraints are already visible.

The United States approaches the issue through research capacity and technological leadership. The National Science Foundation continues to fund foundational robotics research as a national priority.

China’s position highlights the industrial side of the equation. Recent official reporting points to rapid expansion in robotics deployment and smart factory development, making robotics a manufacturing and industrial policy priority.

With this level of funding flowing into the field, robotics and embodied AI are advancing quickly. However, the engineers and scientists building these systems still face an unusually difficult set of technical challenges to solve.

From Chatbots to Physical Bodies

If robots are expected to operate in the real world, they need some capacity for reasoning. AI can make that possible, but training a system for physical environments is far more difficult than training, for example, a chatbot, because the system has to interpret a much wider and messier range of inputs.

This is where Vision-Language-Action, or VLA, models come in. These models try to connect different modalities: vision, language, and motor control. This way, a robot can take visual input, interpret a natural-language instruction, and generate actions to carry it out. A good example of this is Google DeepMind’s RT-2, which was designed to combine web-scale vision-language knowledge with robotics data, so that semantic understanding could improve robotic control.
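To make the vision-in, language-in, action-out shape concrete, here is a minimal Python sketch. It is not RT-2 or any real model: `Observation`, `Action`, and `ToyPolicy` are hypothetical names invented for illustration, and the "policy" is a pair of hand-written rules standing in for a learned network.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Observation:
    """One step of robot input: a camera frame plus a text instruction."""
    image: Sequence[Sequence[float]]  # toy grayscale image, pixel values in [0, 1]
    instruction: str


@dataclass
class Action:
    """Hypothetical low-level command: end-effector deltas plus gripper state."""
    dx: float
    dy: float
    dz: float
    gripper_closed: bool


class ToyPolicy:
    """Stand-in for a learned VLA model (assumption: rules instead of training)."""

    def act(self, obs: Observation) -> Action:
        # "Vision": treat the column holding the brightest pixel as the object.
        width = len(obs.image[0])
        best_col = max(range(width), key=lambda c: max(row[c] for row in obs.image))
        # Map the column index to a lateral offset in [-1, 1].
        offset = 0.0 if width == 1 else 2.0 * best_col / (width - 1) - 1.0
        # "Language": a crude keyword check decides whether to grasp.
        wants_grasp = "pick" in obs.instruction.lower()
        # "Action": move toward the object, and descend and close if grasping.
        return Action(
            dx=offset,
            dy=0.0,
            dz=-0.1 if wants_grasp else 0.0,
            gripper_closed=wants_grasp,
        )


policy = ToyPolicy()
frame = [[0.0, 0.1, 0.9]]  # brightest pixel is in the rightmost column
action = policy.act(Observation(image=frame, instruction="Pick up the cup"))
```

A real VLA model replaces each of these rules with learned components trained on large amounts of data, which is where the difficulty lies.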

However, the more parts you have to optimize for, the harder it is to assemble the entire functioning robotic model. Even just the operation of a robotic arm can require tremendous amounts of training.

Hands Are Harder Than Brains

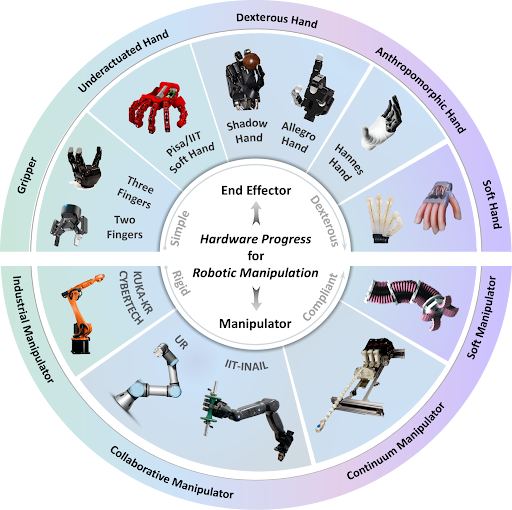

Object manipulation remains one of the toughest challenges in embodied AI. Even when a robot can interpret an instruction correctly, carrying it out in the physical world is another matter entirely. Tasks that involve grasping or handling objects are still highly sensitive to small variations and real-world uncertainty.

This is why manipulation remains such a useful reality check for the broader embodied AI narrative. A robot may interpret an instruction correctly and still fail at the physical part of the task. OpenAI’s early Dactyl work shows how training a dexterous hand requires massive amounts of simulation and careful transfer to the real world.

And that kind of data at that scale is hard to come by.

Data Is the New Oil, But Harder to Extract

One reason robotics has progressed more slowly than language AI is that it does not have an internet-scale corpus ready to use. Robotics data has to be collected through physical interaction, which makes it far harder to standardize and collect.

Right now, robotics relies on a mix of teleoperation, simulation, and video learning. Teleoperation can produce high-quality demonstrations, but it is labor-intensive. Simulation is cheaper and faster, but the gap between virtual environments and the real world remains a persistent problem. Video is attractive because it offers more scaling opportunities, but it may not fully capture contact forces or the control signals needed to reproduce the action.

Therefore, companies are getting more creative in how they collect this data. In late 2024, Niantic revealed that it was building a Large Geospatial Model using player-contributed scans of public locations from Pokémon GO and related apps.

Niantic stresses that these scans were optional and tied to specific public locations, which is true, but that does not make the setup any less convenient for the company. Millions of users were effectively helping build commercial spatial infrastructure under the banner of a game. All to contribute to the growing number of robots in our economy.

Still, more data does not settle the more practical question. What kind of robot is supposed to operate in the world that data is modeling?

Build the Robot, or Rebuild the World?

Now, there is a kind of chicken-or-egg problem here: should companies build warehouses and other environments around the needs of robots, or build robots that can operate effectively in spaces designed for humans?

Narrow, Vertical Robots

Narrow, vertical robots are increasingly being deployed as part of larger operational systems in existing warehouses, factories, and logistics networks. The real value often comes from the orchestration of fleets, software layers, inventory systems, and workflows designed around continuous machine activity rather than isolated robotic tasks. These units are designed to fit existing spaces, but some adaptation of the environment is still necessary. In response, many new warehouses are built with automation and robotic work in mind.

The International Federation of Robotics reported that industrial robot installations reached a record high in 2024, with 295,000 units installed in China alone, and that global demand in factories has doubled over the past decade.

Amazon, for example, says it has deployed more than 1 million robots across its operations network, while systems such as Sequoia are designed to reorganize how inventory moves through fulfillment centers rather than simply adding one more machine to the floor. In that sense, the infrastructure is becoming robotic, not just the equipment.

The Humanoid Form

The humanoid form becomes attractive when the environment is too expensive to redesign.

The humanoid form is often presented as a breakthrough in itself, although the more practical explanation is that human-shaped machines can slot into spaces already built for human movement and labor. Agility Robotics argues that the world was designed for people and that useful robots therefore need to function in those spaces.

Companies are raising large rounds around the category, and manufacturers are beginning to test humanoids in production environments. In February 2026, Bloomberg reported that Apptronik had raised about $520 million at a valuation above $5.5 billion, while BMW said its Figure 02 deployment in Spartanburg had supported production of more than 30,000 BMW X3 vehicles over ten months. Tesla says it has fine-tuned a “production-primed” Optimus design and has been installing first-generation production lines for the robot. We talk about this and other interesting, or maybe ambitious, robotics and embodied AI plans in the following video.

At first glance, this makes the form factor look inevitable. However, that is not quite the right conclusion. Humanoids are attractive largely because the world has already been designed for humans. Warehouses, factories, tools, shelving, stairs, and workstations all assume a body with two arms, two legs, and human reach. Agility Robotics describes the humanoid form as useful because it can operate in human-centric environments without extensive retrofitting.

What’s Coming Next

The next phase of robotics is likely to be narrower than the marketing suggests. Growth is already happening in warehouses and logistics, where tasks are repetitive and the business case is easier to measure.

We also expect to see more automation in industrial settings: more mobile systems in warehouses, and more pilots involving humanoids where existing infrastructure makes a human-like form useful.

Reuters reported in January 2026 that Hyundai plans to deploy Boston Dynamics’ Atlas humanoids at its Georgia plant from 2028, beginning with high-risk and repetitive tasks in manufacturing.

As for household robotics, there will likely be fewer immediate breakthroughs because of the complexity of the home environment, which has system requirements that are harder to meet.

Instead, what comes next is most likely a continued expansion of robotics into environments that can support them operationally. The systems will improve, but the pattern is likely to remain the same for now, with industry first, and broader autonomy only where the economics and the environment make it feasible.

However, an important question follows. If robotics is becoming part of critical infrastructure and industrial strategy, what does that mean for the economy and for human work? As deployment scales beyond controlled pilots, the conversation shifts from what robots can do to what that means for the people working alongside them.

Are They Here to Take Our Jobs?

The idea of robots taking our jobs is no longer pure fiction. However, the economic case for robotics is strongest in sectors where labor is hard to secure to begin with.

The International Federation of Robotics says companies are adopting automation mainly to strengthen supply chains and respond to structural labor shortages. That is consistent with the broader employer picture as well. In the World Economic Forum’s Future of Jobs Report 2025, 58% of employers identified robotics and automation as a transformative force for their business by 2030.

The labor impact is more mixed than either boosters or critics usually admit. Research by Acemoglu and Restrepo found that industrial robots reduced employment and wages in exposed US local labor markets. More recent synthesis work points to a picture in which robot adoption tends to support productivity growth and lower output prices, while wage and total employment effects vary by sector, geography, and the kinds of workers exposed to automation.

That means the real consequence is likely to be reallocation, not an extreme case of mass unemployment. However, it is expected that some jobs will be automated, especially repetitive physical and procedural work. In addition, some jobs will change, with new demand emerging around maintenance, systems integration, supervision, data operations, and AI-related skills. The IMF’s recent work on new skills and AI suggests that AI-related skills can raise wages, but also deepen labor-market polarization and reduce employment in AI-vulnerable occupations in some regions.

There is also a geographic consequence. Robotics can make production less dependent on low labor costs, which affects where manufacturing happens and how supply chains are organized. OECD work suggests industrial robotics can slow offshoring in developed economies by reducing the importance of labor costs in production decisions. This is significant, as robotics will likely also reshape where factories are located in the first place.

So, Where Does the Robotics Industry Stand?

For all the hype, robotics is still far from mastering the messy complexity of the real world. Yet that has not slowed the race to build and deploy these systems, because both the economic and the geopolitical stakes are high.

The real revolution, then, may not arrive as a sudden wave of humanoids in every home. Instead, we will first see a gradual reshaping of industry and labor around machines that still have a bit of a weak grip on most things.

Nonetheless, we are entering a very exciting technological era, and Arcanum Ventures will be here to report on the major milestones in the space.

Don’t Let the Conversation End Here

If this is the kind of conversation you want more of, there are several ways to stay in orbit around Arcanum Ventures.

You can listen to our panels, join us as a speaker, or reach out directly if you are building in a space where the stakes are high and the problems are not simple.

We work with founders and teams operating at the edge of complexity, across areas like privacy tech, Web3, data infrastructure, and token design.

If you are building something ambitious and want serious feedback, let’s talk.
